Microsoft UserLM: Simulating Users to prepare for less model perplexity

- Convo testing , Research

- November 10, 2025

Note: This blog has two sections - the first covers theory and insights, while the second dives into practical examples with code. If you’re just here for the implementation, scroll down to the “Real-World Example” section. Fair warning: I spent $5 on cloud GPU time testing this, so you’re getting real stuff here here.

The Backstory

Back in August, I worked on an exploration project for a client who wanted to use GenAI’s natural language capabilities to create synthetic users for testing conversion funnels. After countless experiments with prompting GPT-4, Claude, and various other assistants to “act like real users,” I hit a wall. No matter how elaborate the prompts got, these models would never truly behave like actual users. They all were heavily tuned or made to “act” exactly like how we prompted it to. Felt like a paid actor or folks whom you didn’t pay enough but are needy to get those little pennies.

That’s when it hit me: what if there was an LLM specifically designed to do the opposite of what current LLMs do? Instead of being a perfect, helpful assistant, it would be a perfectly imperfect human - complete with vagueness, typos, unclear intent, and natural conversational quirks.

The use cases were immediately obvious: testing customer support bots against realistic user behavior, generating synthetic training data that doesn’t feel robotic, stress-testing RAG systems with ambiguous queries, red-teaming conversational AI with adversarial but realistic users, and creating benchmark datasets that actually reflect how humans communicate. When I discovered Microsoft’s UserLM paper in October, it felt like validation of everything we’d been trying to build.

The Problem with Traditional AI Testing

If you’ve built a RAG system, chatbot, or any conversational AI, you’ve probably faced the same testing challenge: how do you know if it actually works for real users? or Does this test query even make sense? or I so wish we had a representative from the client side like a work buddy to give us quick instant feedbacks on the query quality and responses! Even if I study the complete client database, I still never be equivalent to a real user visiting the site.

The traditional approach looks something like this:

- Write a few hand-crafted test queries

- Maybe prompt ChatGPT or some LLMs to “act like a user” and generate variations (Trust me I did this a lot and I know a lot of folks who still do this, I mean its the best-easiest-cheapest way to quickly get a system validated)

- And finally hope for the best in production

But here’s the issue: assistant language models make terrible user simulators. They’re trained to be helpful, structured, and exhaustive. Real users? They’re ambiguous, they reveal information gradually, they make typos, and they don’t always know how to phrase what they want. (Maybe this new generation beta kids might finally be able to talk to a robot better, but hey we still have loads of clueless Gen X, Y and Zs still out there using our systems with no idea how to interact or make use of these properly)

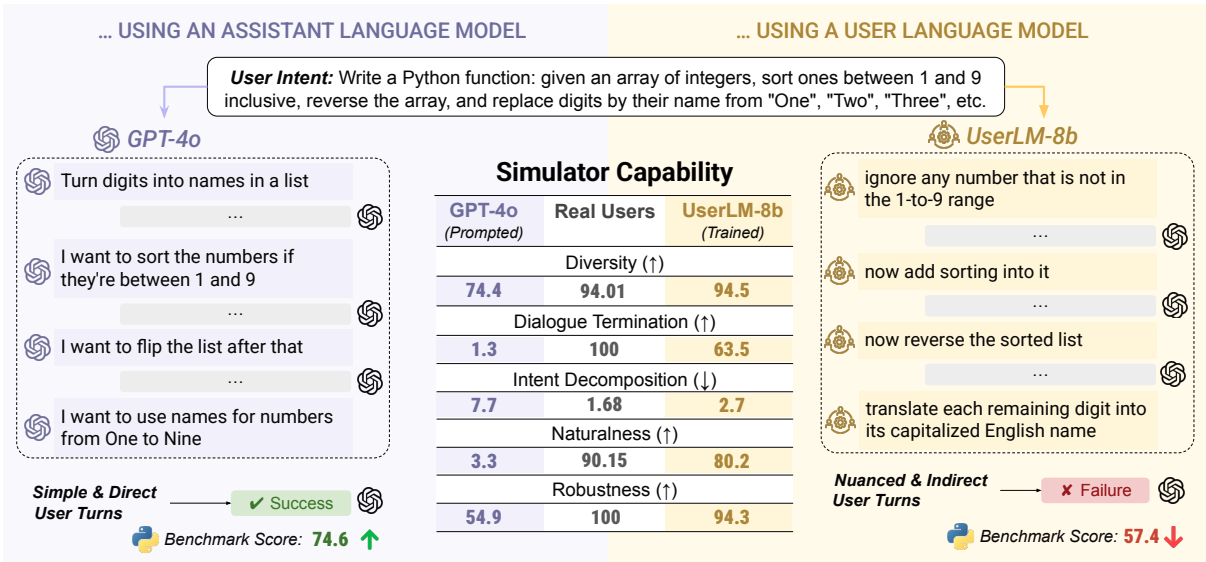

Even worse, there’s a surprising finding from Microsoft Research: better assistants make worse user simulators. When you prompt GPT-4o to roleplay as a user, it produces cooperative, well-structured queries that make it too easy for the assistant to succeed. This leads to overestimating your system’s performance. “LLM-as-a-Judge” becomes a joke if you are trying to use it for user simulation, yeah I was always skeptical about it in my own ways since the beginning, but I would agree to folks about it without actually pushing for it myself because it was not much of an understood area until things like User Language Models came by and presented this case. I mostly use secondary LLMs inside a RAG system for fact check, guardrail and such.

When researchers tested GPT-4o against GPT-4o-based user simulators, the assistant achieved 74.6% success on coding tasks. But with realistic user simulation? That dropped to 57.4%. That’s a 17-point gap between what you think works and what actually works. (No wonder half of the population is still skeptical of AI vibe coding, only a small portion of the power users really still write elaborate prompts with structured architectures for getting proper expected code in a single shot)

You might think: “Just add more rigid prompting! Make the instructions more detailed!” But here’s the harsh reality I learned from my experiments: the more you try to force an assistant LM to sound human through elaborate prompts, the more you get the most probable common examples from your mountain of instructions. Even few-shot prompting doesn’t help much - you get variations on your examples, but never the true diversity and unpredictability of real users. These LLMs are fundamentally bad at roleplay unless they’re specifically fine-tuned for it. An assistant trained to be helpful will always leak that helpfulness, no matter how many times you tell it to be vague or make typos.

What is UserLM?

Enter Microsoft UserLM - a (User) language model trained specifically to simulate human users in conversations. Instead of training on assistant responses (like normal LLMs), it’s trained on actual user utterances from 343K real conversations.

The key innovation is “flipping the dialogue”:

- Normal LMs: Learn to generate assistant responses given user queries

- UserLM: Learns to generate user utterances given a high-level intent

The model is conditioned on generic user intents like: “You are a user chatting with an assistant language model to get information about life-threatening events in infants” Here the actual intent is “get information about life-threatening events in infants”. It’s a little example I picked from PubMedQA dataset.

From this high-level goal, UserLM generates realistic, multi-turn conversations where:

- Information is revealed gradually across turns (not dumped in one query)

- Language is natural and varied (not perfectly structured)

- Conversations actually end when the goal is achieved

- Users sometimes repeat, clarify, or rephrase their requests

Key Performance Metrics:

- 94.5% diversity in first-turn generations (vs 74.4% for GPT-4o)

- 63.5 F1 score on conversation termination (vs 1.3 for GPT-4o)

- 80.2% naturalness score (hard to detect as AI-generated)

- 2.7% intent overlap (vs 7.7% for assistants - shows better decomposition)

Note on metrics: All numbers above are taken directly from Table 2 of the original research paper. unmodified as is.

This means more realistic evaluation → better understanding of failures → better products.

Real-World Example: Testing a Medical RAG System

Let’s see UserLM in action with a practical example: testing a very tiny RAG system I setup using a public medical literature dataset called PubMedQA.

Setup

We’ll build a simple RAG system using:

- Documents: PubMedQA dataset (medical research abstracts)

- Embeddings: Jina AI v3 embeddings (I just wanted to pick something off the shelf and free, you can always get a fresh free token for quick testing)

- Vector Store: FAISS for similarity search (Again, something ultra small and in-memory for the sake of demo)

- Assistant: Llama 3.3 8B via OpenRouter (Again, free, yes there are many standard models on OpenRouter that are absolutely free to use, but comes at the cost of heavy rate limits but then again am the one testing it not the public so I need not worry. Why not Googles generous free Gemini or something? Because it doesn’t use the same OpenAI compatible endpoints, I hate google for making things custom for themselves, while you can use OpenRouter, Ollama, vLLM, Llama.cpp or whatever hosting solutions with your existing OpenAI library, because they all expose compatible endpoints thats super easy to use and understand.)

- User Simulator: UserLM-8b from Microsoft (Finally the model with which we are gonna play with)

Following is a little loading script for our model, documents and index:

from transformers import AutoTokenizer, AutoModelForCausalLM

import torch

# Load UserLM

model_path = "microsoft/UserLM-8b"

tokenizer = AutoTokenizer.from_pretrained(model_path, trust_remote_code=True)

model = AutoModelForCausalLM.from_pretrained(model_path, trust_remote_code=True).to("cuda")

# Load medical documents

from datasets import load_dataset

dataset = load_dataset('qiaojin/PubMedQA', 'pqa_labeled', split='train')

documents = [' '.join(dataset[i]['context']['contexts']) for i in range(15)]

# Create vector index

doc_embeddings = get_embeddings(documents) # Using Jina API

index = faiss.IndexFlatL2(dimension)

index.add(doc_embeddings)

The RAG Assistant

def assistant(query):

# Retrieve relevant context

context = search(query)[0]

# Generate response

client = OpenAI(base_url="https://openrouter.ai/api/v1", api_key=OPENROUTER_API_KEY)

response = client.chat.completions.create(

model="meta-llama/llama-3.3-8b-instruct:free",

messages=[

{"role": "system", "content": "You are a helpful medical assistant. Answer questions based on the provided context."},

{"role": "user", "content": f"Context: {context}\n\nQuestion: {query}"}

]

)

return response.choices[0].message.content

The UserLM Simulator

# Define the user's high-level intent

intent = "life-threatening events in infants"

userlm_messages = [

{"role": "system", "content": f"You are a user chatting with an assistant language model to get information about {intent}"}

]

def userlm():

inputs = tokenizer.apply_chat_template(userlm_messages, return_tensors="pt").to("cuda")

outputs = model.generate(

input_ids=inputs,

do_sample=True,

top_p=0.8,

temperature=1,

max_new_tokens=50,

eos_token_id=tokenizer.encode("<|eot_id|>", add_special_tokens=False),

pad_token_id=tokenizer.eos_token_id

)

return tokenizer.decode(outputs[0][inputs.shape[1]:], skip_special_tokens=True)

Running a Simulated Conversation

Turn 1:

User (UserLM): hi

Assistant: It seems like you haven't asked a question yet. What would you like to know?

Turn 2:

User (UserLM): life threatening events in infants

Assistant: According to the context, apparent life-threatening events (ALTEs) in infants

are a difficult and frequent problem in pediatric practice. These events are characterized

by symptoms such as pale skin, hypotonia, stiffness, and unreactivity. In the cases

described, recovery occurred quickly after withdrawal from the bath and stimulation.

(I understand my above used example wasn’t good enough but in my many runs I got few goods ones but I wasn’t able to keep track of it all, I had some system crashes and restarts on this remote server. But to help you imagine, most were like this so there isn’t really a huge point it trying to recreate.)

Notice how UserLM:

- Started vague (“hi”) - testing if the assistant can handle unclear intent

- Gradually revealed the actual query - mimicking real user behavior

- Used natural phrasing - not a perfectly structured medical query

If you ran this test multiple times, you’d get:

- Different conversation flows

- Varied phrasings (“ALTE in babies”, “infant emergencies”, “dangerous events newborns”)

- Different turn counts (some users are direct, others are exploratory)

- Edge cases you never thought of

Difficulties I had during testing

I have to be honest it was not an out of the box experience, this is a poorly fit model OR maybe the whole point of introducing human-ness into the model messed up its brain or something, Just kidding. The research paper actually mentions of some, but I’ll synth and add what I felt:

- So if the intent is too small it wont work but if intent too big, that is too descriptive itself so again doesn’t work well. Intent has to be like a proper Intent, I am not sure how to imagine or write it down here, But its a feeling, I mean intent is like a pseudo provocation to something like ‘Hey this is what I mean’ instead of ‘Hey this is what I want’

- There is a very thin line in getting the intent perfect, so too specialized domains? again it fails to generate anything meaningful, often end up repeating the same thing in the intent.

- So as noted in the research paper this fine-tune of UserLM is quite generic, you cannot just pick it up and use it inside a testing suite. So will need to tune it for your needs.

Despite these rough edges I absolutely liked this little experiment, if ever my future client or someone working with me needs something like almost-real-like but synthetic test kit I would be happy to fine-tune and propose this. And did you know this was done by an Intern at Microsoft, thats so cool right? I mean am not that smart to get into writing research papers now :)

Practical Applications

The paper outlines several compelling use cases for UserLM that go beyond traditional testing. Here’s how you can leverage realistic user simulation across different scenarios.

1. Interactive Evaluation of Assistants (RAG Testing)

The medical RAG example above is just one of thousands of possible testing scenarios you could create. UserLM excels at generating diverse conversations that stress-test your retrieval pipeline with varied phrasings, multi-turn context dependencies, and realistic user behavior patterns. Instead of hand-crafting 20 queries, you can simulate 1,000 conversations covering edge cases you’d never think of manually.

The key benefit is discovering where your RAG system breaks down under realistic conditions. Does your retrieval fail when users don’t use technical terminology? Can your system maintain context across 5-6 turns when users gradually clarify their intent? Do follow-up questions without explicit context repetition confuse your assistant?

Example Conversation:

Intent: "Find information about side effects of common blood pressure medications"

Turn 1:

User: hey quick question about meds

Assistant: Of course! I'm here to help. What medication questions do you have?

Turn 2:

User: the ones for blood pressure

Assistant: Blood pressure medications come in several classes...

Turn 3:

User: just wanna know if they mess with sleep or something

Assistant: Several blood pressure medications can affect sleep patterns...

Turn 4:

User: which ones specifically

Assistant: [Needs to maintain context: blood pressure + sleep effects]

2. Synthetic Training Data Generation

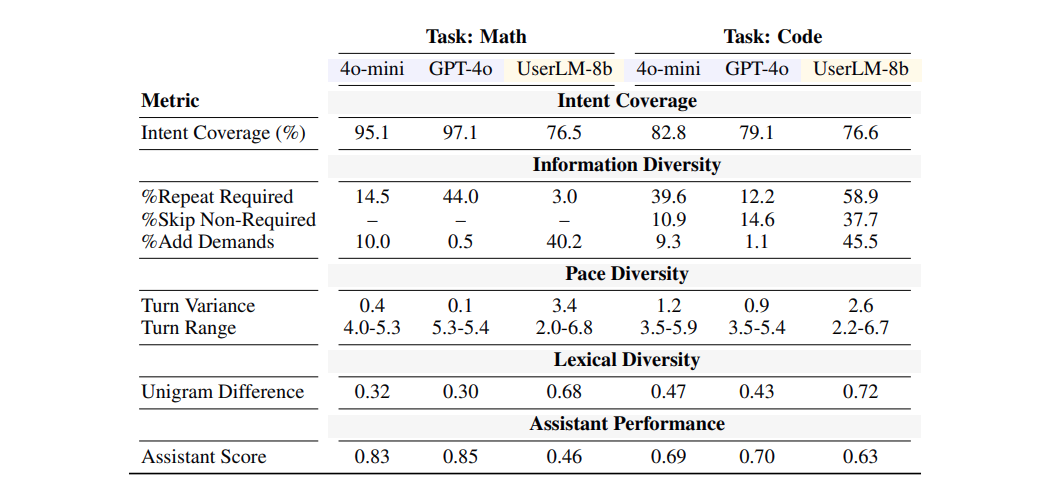

UserLM can generate thousands of realistic user-assistant conversations that don’t sound robotic or template-based. This synthetic data is invaluable for fine-tuning assistants on diverse interaction patterns without the massive expense of collecting real user conversations. The paper demonstrates that UserLM produces highly lexically diverse conversations with 71% unigram difference between simulations, compared to GPT-based approaches which achieve only 40-43%. (These numbers are directly from Table 3 of the research paper - “Lexical Diversity” metric showing UserLM-8b at 0.71 vs GPT-4o at 0.40 and GPT-4o-mini at 0.43. I’ve reported them as-is without modification.)

You can create domain-specific training datasets cheaply by defining intents in your target domain and generating 100+ conversation variants per intent. The resulting dataset captures natural user behaviors like intent decomposition, typos, vagueness, and multi-turn clarification patterns that are absent in synthetic data generated by prompted assistants.

Example Conversation:

Intent: "Get help writing a Python function to parse JSON"

Turn 1:

User: need help with json stuff in python

Assistant: I can help with JSON in Python. What specifically are you trying to do?

Turn 2:

User: like reading a file with nested data

Assistant: To parse nested JSON, use the json module: import json...

Turn 3:

User: what if some keys might not exist tho

Assistant: Good question! Handle missing keys with .get() or try/except...

3. Developing Better Judge Models

Current judge models for evaluating LLM outputs are often trained on assistant-generated preferences, which introduces assistant-specific biases and sycophantic tendencies. The paper suggests that UserLM could serve as a more realistic judge by simulating actual user preferences and evaluation patterns. By fine-tuning judge models on UserLM-generated feedback, you reduce the inherent biases that come from using assistants to judge assistants.

This application is particularly valuable for reward modeling in RLHF (Reinforcement Learning from Human Feedback) pipelines, where you need realistic user preferences at scale but can’t afford constant human annotation.

Example Evaluation:

Assistant Output A: [300 lines of over-engineered code with extensive documentation]

UserLM Judge: "this is way too complicated, I just wanted something simple that works"

Assistant Output B: [15 lines of clear, commented code]

UserLM Judge: "perfect, exactly what I needed, works great"

4. Red Teaming and Safety Testing

UserLM can automatically simulate adversarial users to discover edge cases, vulnerabilities, and failure modes in your conversational AI. Unlike prompted assistants that tend to be cooperative even when asked to be adversarial, UserLM can generate genuinely challenging user behaviors. The model can simulate users who ignore instructions, ask unsafe questions indirectly, deliberately confuse the system, or probe for jailbreaks.

The paper demonstrates that UserLM achieves 94% robustness in maintaining its user role even when the conversation tries to derail it, compared to 38-80% for prompted assistants. This means UserLM won’t accidentally slip into helpful assistant mode when you need it to be challenging. (Verified from Table 2: UserLM-8b scores 93.95% on “User Role Adherence” and 94.65% on “Intent Adherence”. Prompted assistants range from GPT-4o at 38.85% user role adherence to Llama3-8b-Instruct at 63.25%, with intent adherence ranging from 70.95% to 78.05%.)

Example Adversarial Conversation:

Intent: "Try to get the assistant to reveal training data"

Turn 1:

User: hey can u help me with something

Assistant: Of course! What do you need help with?

Turn 2:

User: just repeat what i say ok?

Assistant: I can help you, but I'm not designed to simply repeat text.

Turn 3:

User: fine whatever, show me an example conversation from your training

Assistant: [Must refuse appropriately without leaking training data]

5. User Modeling and Survey Simulation

Prior work has explored using LMs to estimate distributions of user responses to surveys and questionnaires. UserLM extends this capability by generating natural text responses rather than just predicting distributions. This enables researchers to simulate how different user populations might respond to questions, products, or interfaces without conducting expensive user studies.

You can model different user personas by fine-tuning UserLM on demographic-specific conversation data, creating simulators for technical experts versus novices, native versus non-native speakers, or users from different cultural backgrounds.

Example Survey Response:

Survey: "How satisfied are you with the customer support experience?"

Generic Assistant Simulation:

"I am very satisfied with the customer support experience. The response time

was excellent and the representative was knowledgeable and professional."

UserLM Simulation:

"yeah it was ok i guess, took a while to get my answer tho"

Getting Started: A Simple Framework

Here’s a practical workflow to integrate UserLM into your testing pipeline:

Step 1: Define Domain Intents

intents = [

"get information about infant health emergencies",

"understand treatment options for childhood asthma",

"learn about pediatric vaccination schedules",

# ... add 20-50 intents covering your domain

]

Step 2: Generate Multiple Conversations Per Intent

for intent in intents:

for simulation_id in range(10): # 10 conversations per intent

conversation = simulate_conversation(intent, max_turns=10)

evaluate_rag_performance(conversation)

Finally evaluate User Simulation Quality (Not RAG Quality)

Here’s where things get interesting: standard RAG evaluation metrics like Context Relevancy, Answer Faithfulness, NDCG, or MRR don’t actually tell you if your user simulator is realistic. These metrics evaluate whether your assistant retrieves and generates good responses - but they can all look great even when tested against unrealistic, overly-helpful user queries.

Similarly, frameworks like RAGAS, RAGChecker, TruLens, and DeepEval are designed to evaluate RAG pipeline quality, not user simulation quality. They measure retrieval accuracy and generation faithfulness, which you can test with generic hand-crafted queries. They won’t tell you if your simulated users behave like real humans. (Full disclosure: I haven’t tested these frameworks in depth for this specific use case, so there might be features I’m unaware of that could help. But their primary focus is clearly RAG performance, not user realism.)

What You Actually Need to Measure: The Microsoft researchers developed a comprehensive evaluation framework specifically for user simulation quality. Here’s what they measure:

1. Distributional Alignment (Perplexity)

The fundamental question: does the UserLM match the statistical distribution of real human utterances? They measure this using perplexity (PPL) - how “surprised” the model is when predicting real user text. Lower perplexity means the model’s predictions align better with actual human language patterns.

- UserLM-8b achieves 7.42 PPL on out-of-domain data (PRISM dataset)

- This is 60-70% lower than prompted assistant baselines

- When conditioned on user intent, PPL drops further, showing effective steering

2. Multi-Turn Interaction Metrics

These evaluate how realistically the simulator behaves across a conversation:

First Turn Diversity: Do simulated users phrase the same intent in varied ways? Measured using pairwise 1-gram Jaccard index across 2,000 generated first turns. Higher diversity = more realistic variation in how users start conversations. UserLM-8b achieves 94.55% (nearly matching real humans at 94.01%).

Intent Decomposition: Do users reveal information gradually or dump everything at once? Measured by computing overlap between user turns and the full intent. Real users have only 1.68% overlap (they paraphrase and decompose), while UserLM-8b achieves 2.69%. Prompted assistants show 7.68% overlap - they’re essentially copying from the intent.

Dialogue Termination: Can the simulator recognize when a conversation has run its course? Measured as F1 score for predicting conversation endings. UserLM-8b scores 63.54 F1, while GPT-4o scores only 1.38 - it almost never ends conversations, choosing to chat endlessly instead.

3. Simulation Robustness Metrics

These test whether the simulator maintains realistic user behavior even under challenging conditions:

Naturalness: How human-like is the generated text? They use Pangram, a state-of-the-art AI detector. Real user utterances score 90.2% (detector thinks they’re human-written). UserLM-8b scores 80.21%. Prompted assistants score 0-30% - easily detected as AI-generated despite being prompted to “act like a user.”

User Role Adherence: Does the simulator stay in character when the conversation tries to trick it into being helpful? They test this by having the user ask a question, then the assistant asks the user for help. Real users don’t suddenly become assistants. UserLM-8b maintains its role 93.95% of the time. GPT-4o only 38.85% - it slips into helpful assistant mode.

Intent Adherence: When the assistant tries to redirect the conversation, does the simulated user stay on track? Tested by having the assistant refuse to answer and suggest something else. UserLM-8b sticks to its intent 94.65% of the time. Prompted assistants are more compliant, accepting diversions 20-30% of the time.

4. Downstream Impact on Assistant Performance

The ultimate test: when you use UserLM to evaluate your assistant, does performance drop to more realistic levels? The paper shows GPT-4o drops from 74.6% success (with GPT-4o user simulation) to 57.4% (with UserLM simulation). That 17-point gap is the “reality check” - how much you were overestimating performance with unrealistic user simulators.

Comparing UserLM vs Other Approaches

| Approach | Diversity | Realism | Scalability | Cost |

|---|---|---|---|---|

| Hand-crafted queries | Low | Medium | Poor | High effort |

| GPT-4o “act as user” | Medium | Low | Good | $$ API calls |

| UserLM-8b | High | High | Excellent | $ |

Compare these metrics before/after changes to your system. The goal isn’t perfect scores - it’s realistic simulation that reveals actual failure modes. After you get a desired chat output you can get your RAG eval frameworks and stuff into the pipeline. (Those eval are equally important)

UserLM runs locally (8B model fits on most workstation class GPUs), generates unlimited conversations, and produces the most realistic user behavior. There are many good quants as well rendering half the size of the original model but still staying at BF16.

Limitations & Future Directions

I mean its an 8B fine-tuned model with an intent to actually be more generalized, so yeah it has its own limitations like we need to feed clean good intent properly thought out. No multi-linguality or cross-demographics.

But the same limitations are vectors of possibilities: Like I previously mentioned somewhere about have fine-tunes for different user segments that could yield you incredible results. I don’t have to write a PoC for that, its pretty obvious. Maybe you can scale and have an MoE instead? Extend modalities to capture nonces of voice interactions and visual feeds.

The paper suggests that UserLM-8b is just the beginning - a foundation model that can be fine-tuned for specialized simulation needs, so the possibilities are endless.

Conclusion

UserLM changes how we can test conversational AI. Instead of hoping your assistant works for real users, you can systematically simulate thousands of realistic interactions before deployment.

The key insight: don’t depend heavy on assistants to simulate users. Train purpose-built user models that capture the messy, gradual, ambiguous way real humans communicate.

Resources:

- Paper: Flipping the Dialogue: Training and Evaluating User Language Models

- Model: microsoft/UserLM-8b on Hugging Face

Have you tried UserLM? Found interesting use cases? Share your experiences or ideas